What is the Duration Simulator?

Wait until your results reach statistical significance

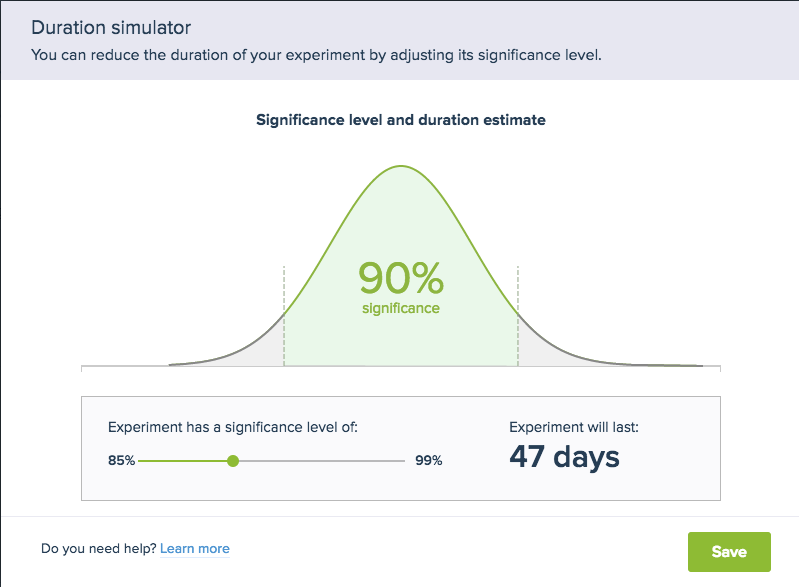

The Duration Simulator lets you change the length of your experiment by adjusting the statistical significance level, which measures how confident you can be in your final results.

Longer tests with more visitors provide results that are more reliable; our Duration Simulator calculates how long a test must run to achieve the desired statistical significance. See the example below.
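To illustrate the kind of calculation involved, here is a minimal sketch using the standard sample-size formula for comparing two conversion rates. It is not Convertize's actual implementation: the function name `estimated_days`, its parameters, the even traffic split and the 80% statistical power are all assumptions made for the example.

```python
import math
from statistics import NormalDist


def estimated_days(baseline_rate, variant_rate, daily_visitors,
                   significance=0.95, power=0.80):
    """Rough number of days a two-variant test needs (hypothetical helper).

    Assumes visitors are split evenly between the two variants and uses
    the textbook two-proportion sample-size approximation.
    """
    normal = NormalDist()
    # Critical value for a two-sided test at the chosen significance level.
    z_alpha = normal.inv_cdf(1 - (1 - significance) / 2)
    # Critical value for the assumed statistical power.
    z_beta = normal.inv_cdf(power)
    variance = (baseline_rate * (1 - baseline_rate)
                + variant_rate * (1 - variant_rate))
    effect = variant_rate - baseline_rate
    visitors_per_variant = (z_alpha + z_beta) ** 2 * variance / effect ** 2
    # Two variants share the daily traffic.
    return math.ceil(2 * visitors_per_variant / daily_visitors)


# Same test and traffic, two different significance levels.
days_at_85 = estimated_days(0.05, 0.06, daily_visitors=1000, significance=0.85)
days_at_95 = estimated_days(0.05, 0.06, daily_visitors=1000, significance=0.95)
print(days_at_85, days_at_95)
```

With these assumptions, the 95% setting needs more days than the 85% setting, and doubling `daily_visitors` roughly halves both figures.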

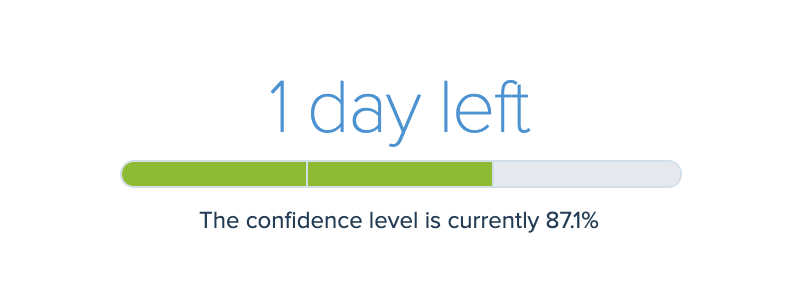

This test currently has a confidence level of 87.1%. Put simply, you can be 87.1% sure that the observed result is real, and there is a 12.9% chance that it is down to random variation. Running the test for longer reduces this uncertainty. Convertize lets you set any significance level between 85% and 99%.
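As a rough illustration of where a figure like 87.1% can come from, the sketch below derives a confidence level from raw visitor and conversion counts with a pooled two-proportion z-test. This is one common approach, not necessarily the one Convertize uses. The function name `confidence_level` and the sample numbers are hypothetical.

```python
import math
from statistics import NormalDist


def confidence_level(conv_a, visitors_a, conv_b, visitors_b):
    """Confidence (1 - two-sided p-value) that variants A and B differ."""
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    # Pooled conversion rate under the assumption of no real difference.
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    std_err = math.sqrt(pooled * (1 - pooled)
                        * (1 / visitors_a + 1 / visitors_b))
    if std_err == 0:
        # No conversions at all (or only conversions): nothing to compare.
        return 0.0
    z = abs(rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(z))
    return 1 - p_value


# Hypothetical counts: the same conversion rates, ten times the visitors.
short_test = confidence_level(50, 1000, 60, 1000)
long_test = confidence_level(500, 10000, 600, 10000)
print(f"{short_test:.1%} -> {long_test:.1%}")
```

The second call uses the same conversion rates with more visitors, so the confidence level rises, which is why a longer test reduces uncertainty.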

Please note: the Duration Simulator is only available when all of the following are true:

1. The experiment has been running for at least 7 days (it is beyond the Warmup stage).

2. The experiment hasn’t completed.

3. The significance level is greater than 88%.

4. The estimated duration is more than 60 days.

However, once you have set a custom significance level, a link to the simulator remains available so that you can change it, regardless of these conditions.
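The availability rules above can be summarised as a single check. This is only a sketch of the logic as described here: the function and parameter names are hypothetical and do not correspond to any Convertize API.

```python
def simulator_available(days_running, completed, significance_level,
                        estimated_days, has_custom_significance=False):
    """Return True if the Duration Simulator link would be shown.

    Thresholds are taken from the conditions listed above; the names
    are illustrative only.
    """
    # A custom significance level keeps the link available regardless.
    if has_custom_significance:
        return True
    return (
        days_running >= 7              # beyond the Warmup stage
        and not completed              # experiment still running
        and significance_level > 88    # percent
        and estimated_days > 60
    )


print(simulator_available(10, False, 90, 75))         # all four conditions met
print(simulator_available(3, False, 90, 75))          # still in Warmup
print(simulator_available(3, False, 90, 75, True))    # custom level already set
```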

What is statistical significance?

To understand this measure, we have to look at how statistics are used to draw conclusions from testing. When you run an A/B test, Convertize receives data from all visitors to your website. We then use that information to confirm or reject a hypothesis about all future visitors.

Let’s say we run a test with the hypothesis: “Having a bigger ‘Buy Now’ button will increase my conversion rate.” We then use our sample (all visitors for the duration of the test) to work out whether a bigger “Buy Now” button increases conversions.

Because a hypothesis is an educated guess, it is susceptible to error. In the graph below, the likelihood of error is represented by the greyed-out sections. If you see an unexpected result, for example a bigger “Buy Now” button leading to a lower conversion rate, it could be one of the results that fall outside the 85% significance level.

If you raise the significance level to 95%, the widely accepted standard, the chance of an incorrect result falls, but the experiment takes longer.

What should I set my statistical significance to?

The default setting for each experiment is 95%, and this is the level of statistical significance we recommend. However, websites with more traffic will reach this level more quickly than those with relatively few daily visitors.

Therefore, you might opt for a lower significance level in situations where you want to get quicker results.
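To get a feel for how much time a lower level might save, the sketch below compares the visitors needed at several significance levels with the visitors needed at 95%. It relies on the textbook two-proportion sample-size approximation with an assumed 80% power, not on Convertize's own calculation, and `relative_duration` is a hypothetical name.

```python
from statistics import NormalDist


def relative_duration(significance, reference=0.95, power=0.80):
    """Test length at `significance` as a multiple of the length at `reference`.

    With traffic and effect size held constant, required visitors scale
    with (z_alpha + z_beta) squared, so the ratio depends only on the
    significance levels and the assumed power.
    """
    normal = NormalDist()
    z_beta = normal.inv_cdf(power)

    def z_alpha(level):
        # Two-sided critical value for the given significance level.
        return normal.inv_cdf(1 - (1 - level) / 2)

    return ((z_alpha(significance) + z_beta)
            / (z_alpha(reference) + z_beta)) ** 2


for level in (0.85, 0.90, 0.95, 0.99):
    print(f"{level:.0%}: {relative_duration(level):.2f}x the 95% duration")
```

Under these assumptions, levels below 95% give a multiple below 1 (a shorter test) and 99% gives a multiple above 1.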